|

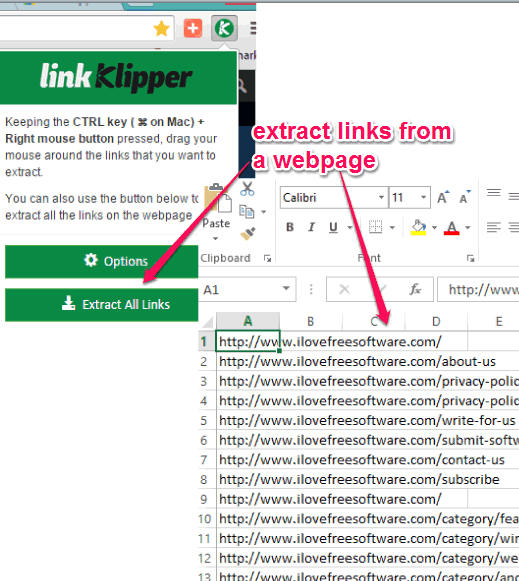

Here is a quick JavaScript snippet to extract all URLs from a webpage with Google Chrome Developer Tools. The JavaScript code generates a list of URLs in CSV format, with the anchor texts and a boolean that tells you whether each URL is internal or external to the current website. Just copy-paste the results into Google Sheets or open them with the Datablist CSV editor.

Step 1: Run JavaScript code in Google Chrome Developer Tools

Open Google Chrome Developer Tools with Cmd + Opt + i (Mac) or F12 (Windows). Copy-paste the JavaScript code and press Enter. The code collects every link on the page with `var urls = document.getElementsByTagName('a')`, flags external links by comparing hosts (`const externalLink = url.host != …`), and writes boolean cells as text with `if (typeof(cell) === 'boolean') return cell ? 'TRUE' : 'FALSE'`.

Notes: The code also works with Firefox, Safari, etc.

A webpage is a collection of data, and the data can be anything: text, images, videos, files, links, and so on. With the help of web scraping, we can extract that data from the webpage. Here, we will discuss how to extract all website links in Python.

Let's say there is a webpage, and you want to extract only the URLs or links from that page to know the number of internal and external links. There are many web applications on the internet that charge hundreds of dollars to provide such features, where they extract valuable data from other webpages to get insights into their strategies. Extracting all links from a website is useful for counting the external and internal links on your webpage, for checking whether those links are broken or working, and for creating a sitemap if you want to generate the full sitemap manually.

You do not need to buy or rely on other applications to perform such trivial tasks when you can write a Python script that extracts all the URL links from a webpage, and that's what we are going to do in this tutorial.

How to Extract All Website Links in Python?

Here, in this Python tutorial, we will walk you through a Python program that can extract links or URLs from a webpage. However, before we dive into the code, let's install the required libraries. Here is the list of the libraries we will use and how to install them:

`requests` is the de-facto Python library for making HTTP requests. We will use it to send GET requests to the URL of the webpage. You can install the requests library for your Python environment using the following pip install command: `pip install requests`

`beautifulsoup4` is an open-source library that is used to extract or pull data from an HTML or XML page. In this tutorial, we will use it to extract the `<a>` tags from the page's HTML. To install beautifulsoup for your Python environment, run the following pip install command: `pip install beautifulsoup4`

`colorama` is used to print colorful text output on the terminal or command prompt. This library is optional for this tutorial, and we will use it only to print the output in a colorful format. To install colorama for your Python environment, run the following pip install command: `pip install colorama`

How to Extract URLs from Webpages in Python?

Alright then, we are all set now. Let's begin with importing the required modules. We are using a Windows system, and that's why we need two additional statements that import and call colorama's `init()`. This is because, on Windows, we need to filter the ANSI escape sequences out of any text sent to stdout or stderr and replace them with equivalent Win32 calls. If you are on Mac or Linux, you do not need to write these two statements. Even if you write them, they will have no effect.

Next, let's define the webpage URL and send a GET request to it. Now we can parse the response HTML text with `BeautifulSoup()` and find all the `<a>` tags present in the response HTML page: `html_page = BeautifulSoup(response.text, "html.parser")`. The `find_all()` function will return a list of all `<a>` tags present in the page.

As we want to extract the internal and external URLs present on the web page, let's define two empty sets, one for each kind of link. Next, we will loop through every `<a>` tag in the list and inspect its `href`: the check `if r"" in href:` marks an internal link, and `elif href == "#":` marks a same-page target link. `add()` is the set method that adds elements to the set object.

Now, let's print all internal URLs with a green background and external links with a red background. The totals are printed with a statement such as `print(Back.MAGENTA + f"Total External URLs: \n")`.

The above program is an application of web scraping with Python. In this tutorial, you learned how to extract all website links in Python. We would recommend you read the official documentation of the libraries used above to know more about web data extraction with Python.
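The Python steps described in this tutorial (collect the `<a>` tags, then sort each `href` into an internal or external set) can be sketched as follows. This is a standard-library variant, not the tutorial's own program: it swaps `html.parser.HTMLParser` in for BeautifulSoup and parses a hard-coded HTML string instead of fetching a page with requests, so it runs without installing anything. The names `LinkCollector`, `classify_links`, `SAMPLE_HTML`, and the `example.com` URLs are assumptions made for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def classify_links(html, page_url):
    """Return (internal, external) sets of absolute URLs found in html."""
    collector = LinkCollector()
    collector.feed(html)
    page_host = urlparse(page_url).netloc
    internal, external = set(), set()
    for href in collector.hrefs:
        if href.startswith("#"):
            continue  # same-page target link, skipped in this sketch
        absolute = urljoin(page_url, href)  # resolve relative links
        parsed = urlparse(absolute)
        if parsed.scheme not in ("http", "https"):
            continue  # ignore mailto:, javascript:, and similar schemes
        if parsed.netloc == page_host:
            internal.add(absolute)
        else:
            external.add(absolute)
    return internal, external


# Assumed sample page; a real run would use the HTML of a fetched response.
SAMPLE_HTML = """
<a href="/about">About</a>
<a href="https://example.com/blog">Blog</a>
<a href="https://other.example.org/">Partner</a>
<a href="#top">Back to top</a>
<a href="mailto:hi@example.com">Mail</a>
"""

internal, external = classify_links(SAMPLE_HTML, "https://example.com/")
print("Total Internal URLs:", len(internal))
print("Total External URLs:", len(external))
```

Comparing the parsed host rather than searching for the domain as a substring of `href` is a design choice of this sketch: it avoids counting an external URL as internal just because the site's name appears somewhere in its path or query string.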
